I've been thinking about what it means for an AI to understand a design vocabulary, not just an aesthetic. Every text-to-image model can generate "an avant-garde fashion image" or "a dramatic red carpet gown." What none of them can do, out of the box, is respond to the way I actually talk about garments: where the weight sits, how a seam holds one panel against another, what happens when tension releases into fall.

I'm not here to debate whether training AI to design is right or wrong, and I don't particularly want to defend the efficiencies of synthetic brain power in a one-person operation. This experiment is about expanding the range of possibilities beyond current human limitations, and I'm using it to find opportunities within my own IP.

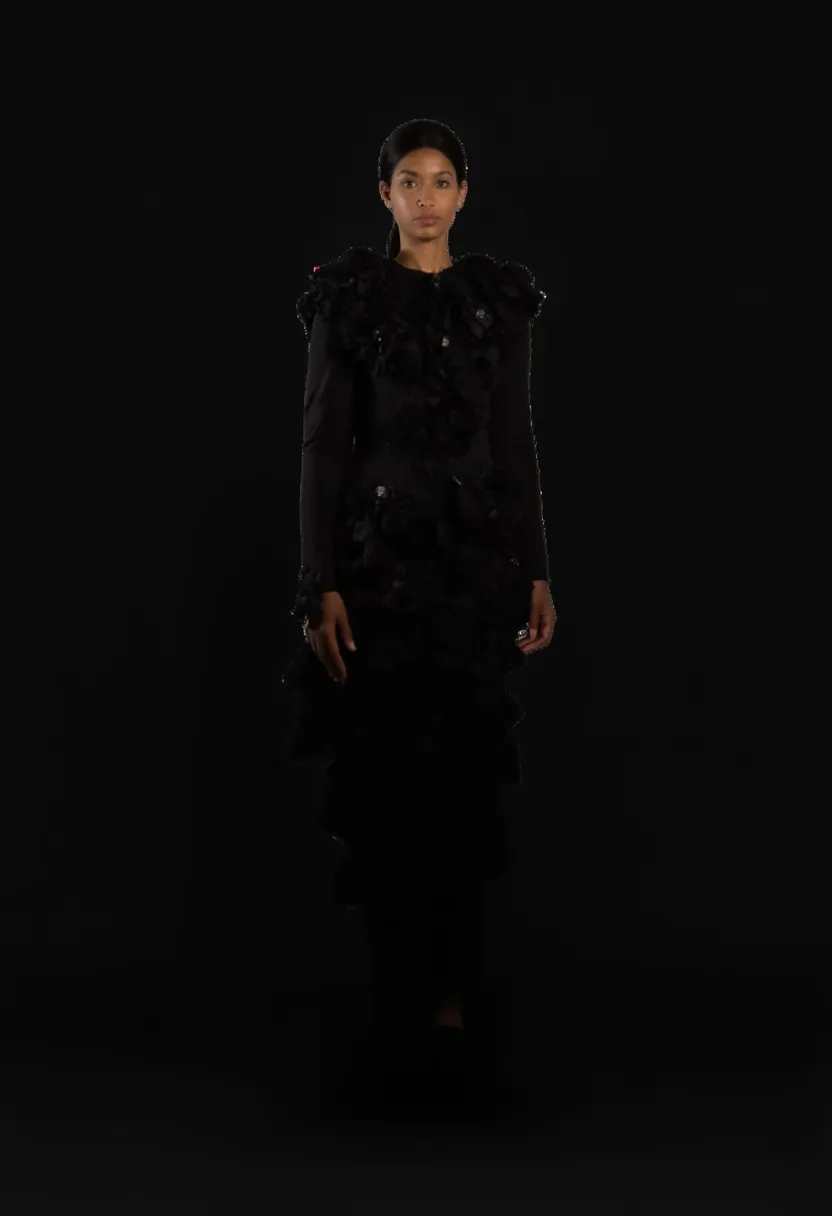

So I trained a custom LoRA on my own collection and taught a text-to-image model to think in the construction language of Pisces Rising, my fashion label. The garments come from visible structure and tension, a material vocabulary that doesn't exist in any generic image model. The whole thing cost about twenty dollars and took about a day.